How to Deploy with Kamal on a Single Server

What is Kamal

Kamal is a deployment tool for rolling out containerized applications. It is developed by 37signals, the creators of Basecamp and HEY.

Kamal provides zero-downtime deployments, rolling restarts, remote builds, and management of auxiliary services. It automatically installs Docker, builds containers, pushes them to a container registry, and deploys the new versions on the servers.

Single server deployment

Most YouTube videos and blog posts focus on deploying across multiple servers (involving load balancers, application servers, and possibly database servers). However, I recently deployed using Kamal on a single server. I believe this article could be helpful if you find yourself in a similar situation.

If you're interested in seeing Kamal's multi-node deployment in action, below is a video of DHH giving a fast-paced and well-structured demo.

Install and deploy with Kamal

Install Kamal on your computer with: gem install kamal, then go to your project directory and run: kamal init. This command creates a .kamal folder and a config/deploy.yml file in your project.

The .kamal folder contains hooks for before and after deployment:

Pre-connect hooks – run before Kamal locks for deployment and before connecting to the remote servers.

Pre-build hooks – executed before the build process begins, allowing for checks before building.

Pre-deploy hooks – happen just before the deployment process starts.

Post-deploy hooks – occur after a deployment, redeployment, or rollback.
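As a minimal sketch of what a hook can look like, the script below could be saved as .kamal/hooks/post-deploy and made executable. It assumes Kamal passes deployment details to hooks through KAMAL_* environment variables such as KAMAL_PERFORMER, KAMAL_VERSION, and KAMAL_HOSTS, which is what the sample hooks generated by kamal init use; check the samples for your Kamal version before relying on these names. The deploy_summary function is a hypothetical helper, not part of Kamal.

```shell
#!/bin/sh
# .kamal/hooks/post-deploy (hypothetical example; the file must be executable)
# Assumption: Kamal exports KAMAL_PERFORMER, KAMAL_VERSION and KAMAL_HOSTS to hooks.

# Build a one-line summary of the deployment that just finished.
# Falls back to "unknown" when a variable is not set.
deploy_summary() {
  printf '%s deployed %s to %s\n' \
    "${KAMAL_PERFORMER:-unknown}" \
    "${KAMAL_VERSION:-unknown}" \
    "${KAMAL_HOSTS:-unknown}"
}

# Print the summary; swap this for a call to your chat or monitoring tool.
deploy_summary
```

A hook that exits with a non-zero status is generally treated as a failure, so keep notification-style hooks tolerant of errors.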

Configuration

Kamal operates under the assumption that one image is used for one application. It adds the service name at the beginning of container names.

The primary configuration for Kamal is found in the servers directive. In this article, I'll include both a web container and a PostgreSQL container.

In the env section, we add both public (clear) and private (secret) configurations.

I'm developing on macOS, and I specify the architecture to Kamal in the builder directive.

# config/deploy.yml

# Name of your application.
service: my_app
image: username/image_name

# Deploy to these servers.
servers:
  web:
    hosts:
      - server_public_ip_address
    labels:
      traefik.http.routers.your_domain.rule: Host(`your_domain.tld`)
      traefik.http.routers.your_domain_secure.entrypoints: websecure
      traefik.http.routers.your_domain_secure.rule: Host(`your_domain.tld`)
      traefik.http.routers.your_domain_secure.tls: true
      traefik.http.routers.your_domain_secure.tls.certresolver: letsencrypt
    options:
      network: "private"

registry:
  # Specify the registry server
  username: registry_username
  password:
    - KAMAL_REGISTRY_PASSWORD

env:
  clear:
    HOSTNAME: your_hostname
  secret:
    - KAMAL_REGISTRY_PASSWORD
    - POSTGRES_PASSWORD

# Configure builder setup.
builder:
  local:
    arch: arm64
    host: unix:///Users/<%= `whoami`.strip %>/.docker/run/docker.sock
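The secret entries above only name variables; the values have to exist in your environment before you deploy. As a rough sketch, the hypothetical missing_secrets helper below checks that each named variable is set and non-empty. It is not part of Kamal, and the variable names are simply the ones used in this article's configuration.

```shell
#!/bin/sh
# Hypothetical pre-flight check: confirm the secrets named in config/deploy.yml are set.

# Print the names of any unset or empty variables.
# Returns non-zero if at least one is missing, zero otherwise.
missing_secrets() {
  missing=""
  for name in "$@"; do
    # Indirect lookup of the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      missing="$missing $name"
    fi
  done
  if [ -n "$missing" ]; then
    echo "Missing secrets:$missing"
    return 1
  fi
}
```

One way to use it is to load your .env file into the shell first (for example with set -a; . ./.env; set +a) and then run missing_secrets KAMAL_REGISTRY_PASSWORD POSTGRES_PASSWORD before deploying.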

Accessories: PostgreSQL

Kamal refers to auxiliary services as accessories. In this article, I'll use PostgreSQL as the only accessory, but you can add as many as you need.

The configuration is self-explanatory, so I won't go into much detail.

# config/deploy.yml

...

accessories:
  db:
    image: postgres:16
    host: server_public_ip_address
    port: 5432
    env:
      clear:
        POSTGRES_USER: "my_user"
        POSTGRES_DB: 'my_app_prod'
      secret:
        - POSTGRES_PASSWORD
    files:
      - scripts/init.sql:/docker-entrypoint-initdb.d/setup.sql
    directories:
      - data:/var/lib/postgresql/data

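The files directive mounts scripts/init.sql into /docker-entrypoint-initdb.d, where the official Postgres image runs it the first time the data directory is initialized. We use it to create the database. Note that the image also creates the database named in POSTGRES_DB on first start, so if the script creates a database with the same name you may see an "already exists" error; in that case, rely on one mechanism or the other.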

# scripts/init.sql

CREATE DATABASE my_app_prod;

Traefik

Traefik handles TLS termination, HTTP-to-HTTPS redirects, and zero-downtime deployments. We'll mount the acme.json file needed for Let's Encrypt and attach Traefik to a private Docker network. The args define the entry points and the details of the ACME challenge.

# config/deploy.yml

...

traefik:
  options:
    publish:
      - "443:443"
    volume:
      - "/letsencrypt/acme.json:/letsencrypt/acme.json"
    network: "private"
  args:
    entryPoints.web.address: ":80"
    entryPoints.websecure.address: ":443"
    entryPoints.web.http.redirections.entryPoint.to: websecure
    entryPoints.web.http.redirections.entryPoint.scheme: https
    entryPoints.web.http.redirections.entrypoint.permanent: true
    certificatesResolvers.letsencrypt.acme.email: "your_email_address"
    certificatesResolvers.letsencrypt.acme.storage: "/letsencrypt/acme.json"
    certificatesResolvers.letsencrypt.acme.httpchallenge: true
    certificatesResolvers.letsencrypt.acme.httpchallenge.entrypoint: web

Manual steps

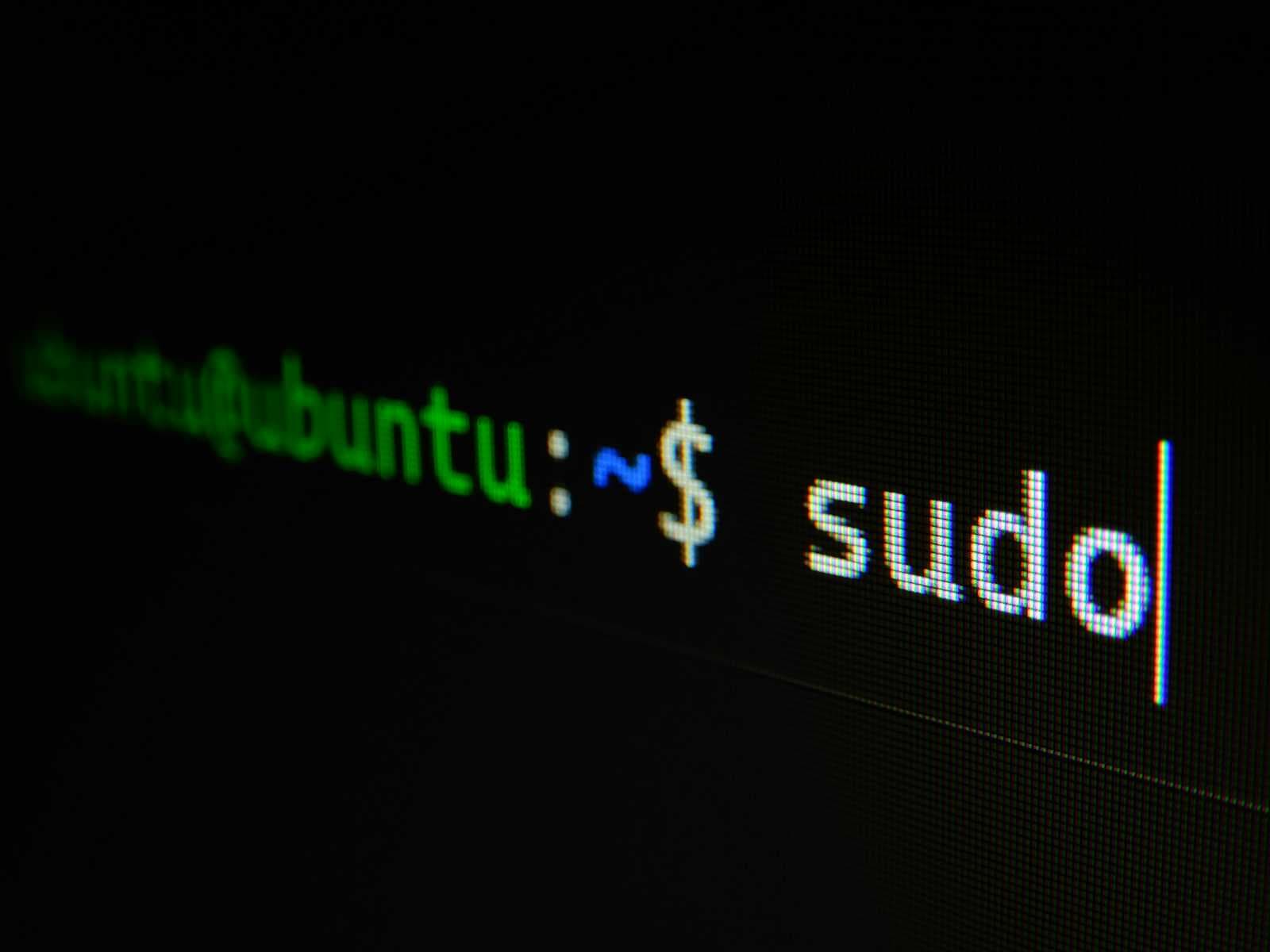

Before we start the deployment process, let's SSH into our server and execute a few commands:

Set up the directory for Let's Encrypt:

mkdir -p /letsencrypt && touch /letsencrypt/acme.json && chmod 600 /letsencrypt/acme.json

Create a Docker network called private:

docker network create -d bridge private
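If you expect to repeat these manual steps, for example when rebuilding the server, they can be wrapped so that running them twice is harmless. The sketch below is one way to do that; prepare_acme_storage and ensure_network are hypothetical helper names, and the only Docker commands used are docker network inspect and docker network create.

```shell
#!/bin/sh
# Re-runnable variant of the manual server preparation (a sketch, run on the server).

# Create the ACME storage file with owner-only permissions.
# $1 is the directory that will hold acme.json, e.g. /letsencrypt.
prepare_acme_storage() {
  dir="$1"
  mkdir -p "$dir" && touch "$dir/acme.json" && chmod 600 "$dir/acme.json"
}

# Create the bridge network only if it does not exist yet.
# $1 is the network name, e.g. private.
ensure_network() {
  name="$1"
  if docker network inspect "$name" >/dev/null 2>&1; then
    echo "network $name already exists"
  else
    docker network create -d bridge "$name"
  fi
}
```

On the server you would then call prepare_acme_storage /letsencrypt followed by ensure_network private. The 600 permission matters because Traefik refuses to use an acme.json file that is readable by other users.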

Deploy

With the config file saved and everything set up on the server, it's time to start the deployment process.

kamal setup

This will:

Connect to the servers over SSH (using root by default, authenticated by your SSH key).

Install Docker and curl on any server that is missing them (using apt-get); this requires root access over SSH.

Log into the registry both locally and remotely

Build the image using the standard Dockerfile in the root of the application.

Push the image to the registry.

Pull the image from the registry onto the servers.

Push the ENV variables from .env onto the servers.

Start a new container with the version of the app that matches the current git version hash.

Stop the old container running the previous version of the app.

Prune unused images and stopped containers to ensure servers don’t fill up.
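Once these steps have finished, a quick request from your own machine can confirm that the site answers. The check_site function below is a hypothetical helper, not a Kamal command; it treats any 2xx or 3xx status as success, so a redirect from HTTP to HTTPS also passes. The domain is the placeholder used in the configuration above.

```shell
#!/bin/sh
# Hypothetical smoke test to run from your own machine after kamal setup.

# Succeed only if the URL answers with a 2xx or 3xx HTTP status.
check_site() {
  url="$1"
  # -s silences progress output, -o discards the body, -w prints only the status code.
  status="$(curl -s -o /dev/null -w '%{http_code}' "$url" || true)"
  case "$status" in
    2??|3??) echo "OK: $url returned $status" ;;
    *) echo "FAIL: $url returned $status" >&2; return 1 ;;
  esac
}
```

For example, check_site https://your_domain.tld exercises the TLS setup. A freshly issued certificate can take a short while to appear, so an early failure is not necessarily a configuration error.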

Other commands you might find useful

kamal env push - push env var changes (won't restart services)

kamal redeploy

kamal app logs

kamal traefik logs

kamal app exec 'python3 --version' - run a command on all your servers, here to check the Python version

kamal app exec -i bash - new container bash session

kamal details - gives you a snapshot of your containers, their status, and how they are performing

kamal rollback [previous_image_tag] - execute a rollback

kamal traefik reboot - reboot Traefik

kamal traefik reboot --rolling - reboot Traefik on your hosts in sequence rather than in parallel; this avoids downtime only when more than one host is serving traffic

Kamal makes it straightforward to roll out a containerized application on a single server with minimal downtime. Use this article as inspiration for your own configurations rather than as a strict guide.

Happy deploying!